This is a guest post by Julie Allinson, Technology Development Manager for Library & Archives at York. Julie has been working on York's implementation for the 'Filling the Digital Preservation Gap' project. This post discusses preliminary work to define a data model for 'datasets'.

For Phase three of our 'Filling the Digital Preservation Gap' project, I've been working on a prototype to illustrate how PURE and Archivematica can be used as part of a research data management lifecycle. Our technology stack at York is Hydra and Fedora 4, but an important aim of the project is to ensure that the thinking behind the prototype applies to other stacks too. Central to adding any kind of data to a research information system, repository or preservation tool is the data model that underpins the metadata. For this I've been using the Portland Common Data Model (PCDM) and its various extensions (particularly Works).

In the past couple of years there has been a lot of work happening around PCDM, described as "a flexible, extensible domain model that is intended to underlie a wide array of repository and DAMS applications". PCDM provides a small Models ontology of classes and properties, with extension ontologies for Works and Use, among others. I like PCDM because it is high level enough to provide a language to talk across different domains and use cases.

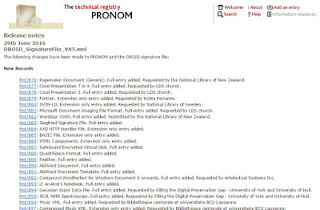

Datasets data model version 1

My first attempt at a data model for datasets based on PCDM can be seen below.

[Figure: Datasets Data Model v1]

Starting with the Dataset object at the top: for York, this is equivalent to a dataset record in our PURE research information system. The only metadata expected at this level describes the dataset as a whole and, for us, will largely come from PURE.

Below this, you'll see that I've begun to model some OAIS constructs: the Archival Information Package (AIP) and the Dissemination Information Package (DIP). The AIP is the deposit of data prepared for preservation, a single package of data "consisting of the Content Information and the associated Preservation Description Information (PDI), which is preserved within an OAIS." (DCC Glossary). The DIP is a representation of the Content Information in the AIP, produced for dissemination. OAIS, by the way, prescribes no standard approach for structuring the AIP or DIP.

As it stands, this model does not consider how to 'unpack' the AIP at all; for our prototype we are (or were, see below) intending simply to point to the AIP in Archivematica.

The DIP as illustrated above is based on what Archivematica generates. For illustration, the diagram includes the processing configuration and METS files that Archivematica produces by default as FileSets. These aren't part of the dataset as deposited, which is why they are not modelled as 'Works' in their own right.

GenericWork is intended for each unit or 'Work' in the dataset as deposited, or a representation thereof. GenericWork can be used with any kind of data object, and it might be independently re-used, e.g. as a member of another dataset. In most cases for research data we probably won't know much about what the data is, so GenericWork will be used, but sometimes it may make sense to use existing models. For example, if the dataset is a collection of images then a more tailored Image model could be used for each image, or if a dataset includes existing objects that are already in our repository, those might already have different models. They can still be members of our dataset.
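To make the version 1 structure concrete, here is a minimal in-memory sketch. The class and field names simply mirror the PCDM terms in the diagram; they are hypothetical and not part of Hydra, Fedora or any PCDM library. It illustrates two points from the paragraphs above: a GenericWork can be shared by more than one Dataset, and Archivematica by-products sit in the DIP as plain FileSets rather than Works.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class File:
    """One representation of a piece of data, tagged with a PCDM Use term."""
    name: str
    use: str = "OriginalFile"


@dataclass
class FileSet:
    """Groups the representations (original, service copy, ...) of one file."""
    files: List[File] = field(default_factory=list)


@dataclass(eq=False)  # compare Works by identity, so a shared Work is one object
class GenericWork:
    """A unit of the dataset as deposited; it may be re-used elsewhere."""
    title: str
    file_sets: List[FileSet] = field(default_factory=list)


@dataclass
class DIP:
    """Dissemination package: Works from the deposit plus extra FileSets."""
    works: List[GenericWork] = field(default_factory=list)
    # Archivematica outputs (METS, processing configuration) are FileSets only,
    # because they were not part of the dataset as deposited.
    extra_file_sets: List[FileSet] = field(default_factory=list)


@dataclass
class Dataset:
    """Equivalent to a dataset record in PURE (hypothetical identifier field)."""
    pure_id: str
    members: List[GenericWork] = field(default_factory=list)


# Illustrative objects; identifiers and file names are made up for the example.
interview = GenericWork("interview-01", [FileSet([File("interview-01.wav")])])
dataset_a = Dataset("pure-0001", [interview])
dataset_b = Dataset("pure-0002", [interview])  # the same Work re-used
dip = DIP([interview], [FileSet([File("METS.xml")]),
                        FileSet([File("processing-config.xml")])])
```

Because GenericWork uses identity comparison, re-use across datasets means sharing one object rather than copying it, which is closer to how a repository would link to an existing Work.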

The model is intended to allow a Dataset to include multiple AIPs, so I have suggested local predicates, hasDIP and hasAIP, to establish the relationship between each AIP and its DIP.
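The hasAIP/hasDIP pairing can be sketched as plain N-Triples. This is a minimal illustration assuming a hypothetical local namespace (http://example.org/local#) and hypothetical repository URIs; it also assumes the Dataset relates to its packages through pcdm:hasMember, which the model above does not specify. Because each AIP is paired with its own DIP, a Dataset can carry several packages without ambiguity.

```python
from typing import List, Tuple

PCDM = "http://pcdm.org/models#"
LOCAL = "http://example.org/local#"  # hypothetical namespace for hasAIP/hasDIP


def nt(subject: str, predicate: str, obj: str) -> str:
    """Format one N-Triples statement whose three terms are all IRIs."""
    return f"<{subject}> <{predicate}> <{obj}> ."


def link_packages(dataset: str, pairs: List[Tuple[str, str]]) -> List[str]:
    """Return statements tying each AIP to its DIP, in both directions.

    `pairs` holds (aip_uri, dip_uri) tuples, one per AIP in the Dataset.
    """
    triples = []
    for aip, dip in pairs:
        triples.append(nt(dataset, PCDM + "hasMember", aip))  # assumed relation
        triples.append(nt(dataset, PCDM + "hasMember", dip))  # assumed relation
        triples.append(nt(aip, LOCAL + "hasDIP", dip))
        triples.append(nt(dip, LOCAL + "hasAIP", aip))
    return triples


base = "http://example.org/rest/"  # hypothetical Fedora base URI
for line in link_packages(base + "dataset1",
                          [(base + "aip1", base + "dip1"),
                           (base + "aip2", base + "dip2")]):
    print(line)
```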

The trouble with DIPs: an alternative model

Discussing this model recently with Justin Simpson from Artefactual Systems, I got to thinking that the DIP is a really artificial construct: effectively a presentation 'view' on the data and not really a separate 'Work' in its own right. Archivematica's DIP, at present, provides only files and doesn't reflect the original folder structure, which may well be meaningful and necessary for a dataset. Perhaps what we really need is the AIP, described fully in Fedora, leaving presentation of the DIP to the interface level? A set of rules for producing a DIP to our own local specification might go like this (using the PCDM Use ontology): if there is a Preservation Master file, produce a Service File for user access; otherwise present the Original File to the user.
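The local DIP rule can be expressed as a small function. This is a sketch under stated assumptions: a FileSet is represented as a dictionary keyed by PCDM Use term URIs, and `derive_service_file` is a hypothetical callback standing in for whatever conversion actually produces the access copy.

```python
from typing import Callable, Dict

PCDMUSE = "http://pcdm.org/use#"
PRESERVATION_MASTER = PCDMUSE + "PreservationMasterFile"
SERVICE = PCDMUSE + "ServiceFile"
ORIGINAL = PCDMUSE + "OriginalFile"


def access_file(file_set: Dict[str, str],
                derive_service_file: Callable[[str], str]) -> str:
    """Return the file to present to users for one FileSet.

    If a Preservation Master exists, a Service File is produced from it (once)
    and returned; otherwise the Original File is presented.
    Note: the dictionary is updated in place with any new Service File.
    """
    if PRESERVATION_MASTER in file_set:
        if SERVICE not in file_set:
            file_set[SERVICE] = derive_service_file(file_set[PRESERVATION_MASTER])
        return file_set[SERVICE]
    return file_set[ORIGINAL]


def to_jpeg_name(path: str) -> str:
    """Hypothetical stand-in for a real conversion: it only renames the file."""
    return path.rsplit(".", 1)[0] + ".jpg"


scan = {ORIGINAL: "objects/scan.tif", PRESERVATION_MASTER: "preservation/scan.tif"}
readme = {ORIGINAL: "objects/readme.txt"}
print(access_file(scan, to_jpeg_name))    # preservation/scan.jpg
print(access_file(readme, to_jpeg_name))  # objects/readme.txt
```

Deriving the Service File lazily, only when it is first requested, is one design choice; a repository could equally generate it at ingest time.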

The new model would look something like this:

[Figure: Datasets Data Model alternative]

The DIP would then be a view constructed from elements of the AIP.
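Treating the DIP as a view means it can be computed from the AIP description whenever it is needed. The sketch below assumes a hypothetical AIP description: a list of (original folder path, FileSet) pairs, where a FileSet maps a short PCDM Use term to a stored location, and a Service File is assumed to have been derived already wherever a Preservation Master exists. Unlike a flat list of files, the resulting view keeps the depositor's folder structure.

```python
from typing import Dict, List, Tuple

FileSet = Dict[str, str]  # short PCDM Use term -> stored file location


def choose(file_set: FileSet) -> str:
    """Local rule: Service File if a Preservation Master exists, else Original."""
    if "PreservationMasterFile" in file_set and "ServiceFile" in file_set:
        return file_set["ServiceFile"]
    # No master (or no derived copy yet): fall back to the Original File.
    return file_set["OriginalFile"]


def dip_view(aip: List[Tuple[str, FileSet]]) -> Dict[str, str]:
    """Map each file's original path (folder + name) to the file to present."""
    view = {}
    for folder, file_set in aip:
        name = file_set["OriginalFile"].rsplit("/", 1)[-1]
        view[f"{folder}/{name}"] = choose(file_set)
    return view


# Illustrative AIP description; paths are made up for the example.
aip = [
    ("survey/2016", {"OriginalFile": "objects/survey/2016/responses.xlsx",
                     "PreservationMasterFile": "preservation/responses.csv",
                     "ServiceFile": "access/responses.csv"}),
    ("docs", {"OriginalFile": "objects/docs/codebook.pdf"}),
]
for path, location in dip_view(aip).items():
    print(path, "->", location)
```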

This model feels like a better approach than version 1, as it makes it possible to describe the 'whole' dataset. I do have some immediate questions about the model, though:

- Is a dataset really a pcdm:Collection, rather than a Work?

- If the GenericWork is each data file, irrespective of whether it can be used or understood on its own, how useful is that in reality? Is the GenericWork really needed, or are FileSets enough? Is there genuine value in identifying each individual piece of data as a 'Work' (for re-use outside of the dataset, for example)?

And when thinking beyond the model, about how this would actually work for different use cases, implementation questions start to surface.

Beyond the model

1) Dataset size and structure

Datasets may contain thousands, even millions, of files structured into folders, where the folders may impart meaning to the data or be purely arbitrary. Fedora 4 can, by design, handle a folder structure using its implementation of LDP Basic Containers. As illustrated below, each folder is a Basic Container and each data file is a Work, with FileSet and File objects.

- AIP ldp:contains folder1

- folder1 ldp:contains folder2

- folder2 ldp:contains folder3

- folder3 ldp:contains GenericWork

- GenericWork pcdm:hasMember FileSet
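The containment chain in the list above can be generated from a file's path within the AIP. A minimal sketch, assuming that child URIs are formed by appending the folder or file name to the parent URI and that the FileSet URI is the Work URI plus a hypothetical `/fileset` suffix; real repositories (Hydra included) may mint identifiers quite differently.

```python
from typing import List, Tuple

LDP_CONTAINS = "http://www.w3.org/ns/ldp#contains"
PCDM_HAS_MEMBER = "http://pcdm.org/models#hasMember"

Triple = Tuple[str, str, str]


def containment_triples(aip_uri: str, relative_path: str) -> List[Triple]:
    """Turn a file path inside an AIP into containment statements.

    Each folder becomes a Basic Container contained by its parent; the file
    becomes a GenericWork that has one FileSet as a member.
    """
    *folders, filename = relative_path.split("/")
    triples: List[Triple] = []
    parent = aip_uri
    for folder in folders:
        child = f"{parent}/{folder}"  # hypothetical URI scheme
        triples.append((parent, LDP_CONTAINS, child))
        parent = child
    work = f"{parent}/{filename}"
    triples.append((parent, LDP_CONTAINS, work))
    triples.append((work, PCDM_HAS_MEMBER, f"{work}/fileset"))  # hypothetical
    return triples


for s, p, o in containment_triples("http://example.org/rest/aip1",
                                   "folder1/folder2/folder3/data.csv"):
    print(s, p.rsplit("#", 1)[-1], o)
```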

But if each of those folders is an object in Fedora, and each file is in Fedora with a Work, a FileSet and several File objects, then the number of Fedora objects multiplies very quickly. This raises some questions:

- Would it be better to avoid the cost to Fedora of creating many, many objects?

- What alternative approaches could we take? Use BagIt and have Fedora reference only the 'bag'? Store only data files and create an 'order' to represent the folder structure (as outlined in PCDM 2.0)?
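A back-of-the-envelope count shows why the object cost matters. Assuming one Fedora object for the AIP, one per folder, and a Work, a FileSet and a number of File objects for every data file (the default of three Files per FileSet is a hypothetical figure, e.g. original, preservation and service copies), the total grows in proportion to the number of files but with a sizeable multiplier:

```python
def fedora_object_count(n_files: int, n_folders: int,
                        files_per_fileset: int = 3) -> int:
    """Estimate Fedora objects for one AIP modelled file by file.

    Counts the AIP container, one Basic Container per folder, and for each
    data file a Work, a FileSet and `files_per_fileset` File objects.
    """
    per_data_file = 1 + 1 + files_per_fileset  # Work + FileSet + Files
    return 1 + n_folders + n_files * per_data_file


print(fedora_object_count(1_000, 50))          # 5051
print(fedora_object_count(1_000_000, 10_000))  # 5010001
```

Under these assumptions a million-file dataset means roughly five million objects, which is what makes alternatives such as referencing only a BagIt 'bag' worth considering.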

2) Storing Files

'Where should data actually be stored permanently?' is another practical question I've been thinking about. On the one hand, Archivematica makes the AIP and its files available via URLs in the Storage Service and stores the files in a location of your choosing. On the other, Fedora can contain data files, or reference them via a URI. This gives us the flexibility to do several things:

- Leave the AIP and DIP in Archivematica's stores and use URIs in Fedora to reference all of the files and build a PCDM-modelled view of the data (Archivematica as preservation and access store).

- Manage all files in Fedora, treating the Archivematica copy of the data as temporary (Archivematica as sausage factory).

- Have two copies of AIP data, one in Archivematica and one in Fedora (LOCKSS model).

- Manage Preservation files in Archivematica and delivery/access files in Fedora (Archivematica as preservation and Fedora as access).
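The first and fourth options rely on Fedora holding a reference to a file that lives in Archivematica's store. The sketch below only builds the HTTP request; it does not send it. It assumes the `message/external-body` convention that Fedora 4.x used for external content (later Fedora releases changed the mechanism, so check the documentation for your version), and both URLs in the usage example are hypothetical placeholders rather than real Fedora or Storage Service paths.

```python
import urllib.request


def external_reference_request(fedora_uri: str,
                               stored_file_url: str) -> urllib.request.Request:
    """Build a PUT request asking Fedora to record a pointer to an external file.

    Assumption: Fedora 4.x external content via a message/external-body
    Content-Type, so Fedora records the URL instead of ingesting the bytes.
    """
    content_type = ('message/external-body; access-type=URL; '
                    f'URL="{stored_file_url}"')
    return urllib.request.Request(
        fedora_uri,
        data=b"",  # empty body: the content lives at stored_file_url
        headers={"Content-Type": content_type},
        method="PUT",
    )


req = external_reference_request(
    "http://localhost:8080/rest/dataset1/aip1/data.csv",  # hypothetical
    "https://storage.example.org/aip1/objects/data.csv",  # hypothetical
)
print(req.get_method(), req.full_url)
print(req.get_header("Content-type"))
# urllib.request.urlopen(req) would send it to a running repository.
```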

Keeping data in Archivematica makes it easy to carry out additional preservation actions in future, such as re-ingesting when format policy rules change, whereas managing all files within Fedora unlocks Fedora's audit functionality, fixity checking and versioning. Having two copies is attractive as a preservation strategy, but could be difficult to justify and sustain if data collections grow to a significant size.

On balance, I think the fourth option (Archivematica as preservation and Fedora as access) is best for the short term, with the other options worth reconsidering as both Archivematica and Fedora mature. But I'd be really keen to hear different views.

Hopefully this post illustrates that creating an outline data model is pretty easy, but when it comes to thinking about it in terms of implementation decisions, all kinds of ifs and buts start coming up.

In the model above, each data file is a Work. Each work contains one or more FileSets, and each FileSet contains one or more different representations of the file.

Is it really possible to define a general datasets model that could encompass data from across disciplines, of various sizes, structures and created for a variety of purposes? Data that might be independently re-usable (a series of oral history interviews) or might only be understandable in combination with other files (a database schema document, for example)?

This is very much a work in progress, and I'd really welcome feedback from others who have done allied work or anyone who has suggestions and comments on the approaches and issues outlined above.

Jenny Mitcham, Digital Archivist